One year on and there's been a slew of articles revisiting the events of the past year. I was going to ask what has prompted this little flurry, but it's obviously the anniversary thing. With increasing pressure for a public inquiry, it seems that some of the key players have been trying to position themselves favourably, so let's have a look at what's been written, versus what the contemporaneous documentation actually says. SAGE minutes can be found here, I think (I downloaded the relevant docs a while back).

The first article I noticed was Laura Kuenssberg's “Inside Story”. She “talked to more than 20 of the people who made the life and death decisions on Covid”. The relevant passage that I am interested in concerns the decision making around mid-March:

“13 March, the government's Scientific Advisory Group for Emergencies (Sage) committee concluded the virus was spreading faster than thought.

But it was Downing Street "modellers in the building", according to one current official, who pored again over the numbers, and realised the timetable that had only just been announced was likely to result in disaster.

The next morning, a small group of key staff got together. Simple graphs were drawn on a whiteboard and the prime minister was confronted with the stark prediction that the plan he had just announced would result in the NHS collapsing under the sheer number of cases.

Several of those present tell me that was the moment Mr Johnson realised the urgency - that the official assumptions about the speed of the spread of this new disease had been wrong.

[...]

On 16 March, the public were told to stop all unnecessary social contact and to work at home if possible.

[...]

For many inside government, the pace of change that week was staggering - but others remain frustrated the government machine, in their view, had failed to move quickly enough.”

The narrative being presented here of ponderous government is significantly misleading.

The govt claimed at the time to be paying close attention to the scientific advice from SAGE, and the specific change to SAGE's assessment on the 13th March was not that the disease was spreading any more rapidly, but merely that the number of infections was higher than previously thought (due to greater importation from abroad). This is a key distinction that anyone numerate should be able to grasp readily. To quote from SAGE minutes on the 13th:

“Owing to a 5-7 day lag in data provision for modelling, SAGE now believes there are more cases in the UK than SAGE previously expected at this point, and we may therefore be further ahead on the epidemic curve, but the UK remains on broadly the same epidemic trajectory and time to peak.

[...]

SAGE was unanimous that measures seeking to completely suppress spread of Covid-19 will cause a second peak.”

Changing the estimate of the number of cases just brings the peak forward by a few days. Even a factor of 2 amounts to only a single doubling time, which they thought to be about 5-7 days at that time. Changing the estimate of the growth rate could (and in fact did) change the timetable and urgency much more significantly, but this didn't happen for another week and a half.
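To make the arithmetic concrete, here is a rough sketch assuming simple exponential growth. The starting infection count, the threshold and the 3-day doubling time are made-up illustrative numbers of mine, not figures from the SAGE minutes; only the 5-day doubling time is taken from the 5-7 day range quoted above.

```python
import math


def days_to_reach(threshold, current, doubling_time):
    """Days for infections to grow from `current` to `threshold`
    under pure exponential growth with the given doubling time (days)."""
    return doubling_time * math.log2(threshold / current)


def shift_from_rescaling(factor, doubling_time):
    """Days by which the whole timeline moves forward if the current
    number of infections turns out to be `factor` times higher."""
    return doubling_time * math.log2(factor)


# Hypothetical numbers, for illustration only.
current = 10_000        # assumed infections today
threshold = 1_000_000   # assumed level at which capacity is exceeded

base = days_to_reach(threshold, current, doubling_time=5)
more_cases = days_to_reach(threshold, 2 * current, doubling_time=5)
faster_growth = days_to_reach(threshold, current, doubling_time=3)

print(f"5-day doubling, original case count: {base:.1f} days")
print(f"5-day doubling, twice as many cases: {more_cases:.1f} days")
print(f"3-day doubling, original case count: {faster_growth:.1f} days")
```

With these assumed inputs, doubling the case count moves the threshold date forward by exactly one doubling time (5 days), whereas shortening the doubling time from 5 to 3 days cuts the time to the threshold by roughly 40%, whatever the starting numbers.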

It is not clear who “the modellers in the building” refers to in Kuenssberg's piece, but they are clearly not SAGE. Maybe Cummings had run a few numbers on a spreadsheet, but since SAGE was supposed to be an assembly of world-leading experts, it would hardly be appropriate to discard their analyses in favour of his. For that matter, I had also blogged that the mitigation plan was likely to overwhelm the NHS (a conclusion that I reached around the 9th March based on some very simple calculations) but I wouldn't expect Johnson to listen to me either. SAGE minutes are very clear that they still believed the doubling time to be 5-7 days right up to the 18th March and had described any overload on the NHS as being some way off (albeit a looming problem that would need addressing at some time in the future). They were unanimously (see above) opposed to suppression at this point.

On the 16th, the SAGE meeting changed its advice somewhat and suggested that some social distancing measures (but not school closures) should be implemented promptly:

“SAGE advises that there is clear evidence to support additional social distancing measures be introduced as soon as possible.

[...]

SAGE will further review at its next meeting whether, in the light of new data, school closures may also be required to prevent NHS capacity being exceeded.”

Clearly there was some increased urgency here but NOT any indication that the NHS was under immediate threat, in direct contradiction to Kuenssberg's unattributed claim above that “the prime minister was confronted with the stark prediction that the plan he had just announced would result in the NHS collapsing under the sheer number of cases.” I'm not saying it is impossible that anyone said such a thing, but if they did, they were an isolated voice and certainly not representative of SAGE as a whole.

Immediately following the SAGE meeting on the 16th, the Govt did of course request that people avoid all unnecessary social contact. Admittedly, this instruction had neither legal force nor economic support at that point but SAGE was obviously reasonably satisfied with the adequacy of this plan as can be seen from their minutes of the 18th (at which time they also recommended school closures):

“SAGE advises that the measures already announced should have a significant effect, provided compliance rates are good and in line with the assumptions. Additional measures will be needed if compliance rates are low.”

So it was only in the case of poor compliance that additional measures would be required.

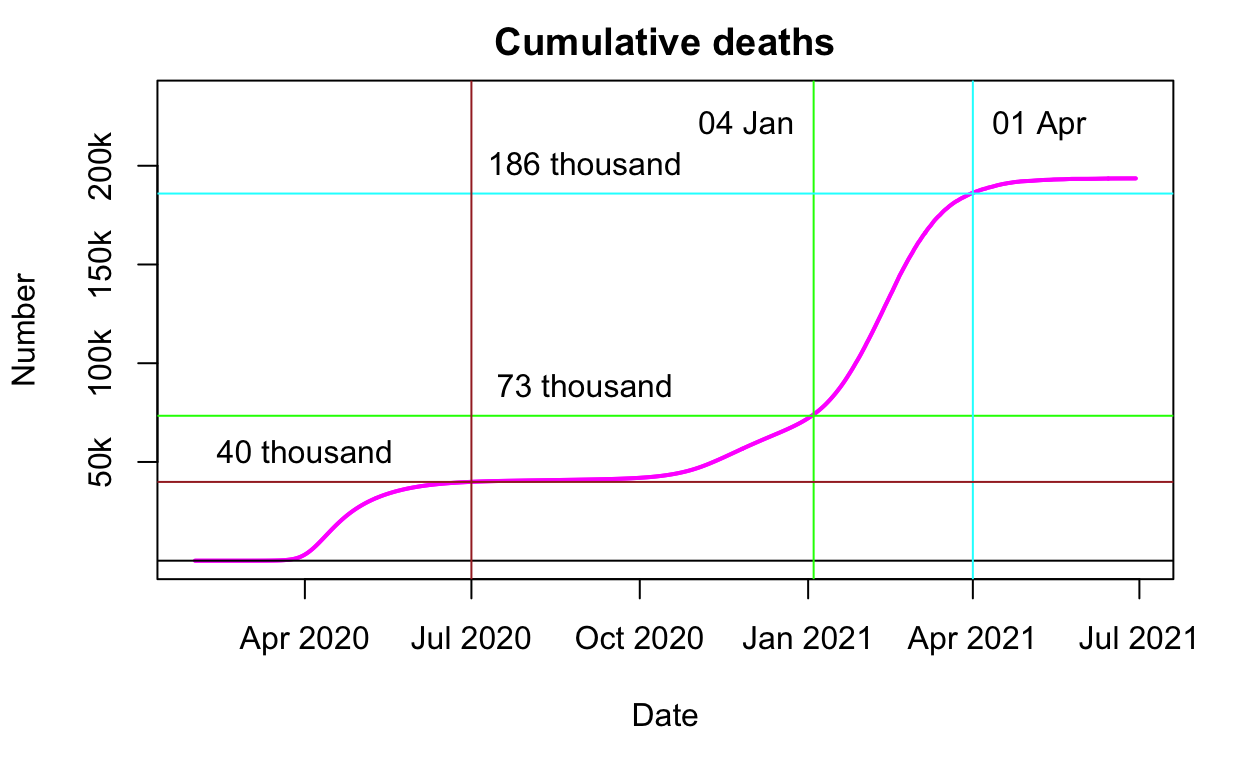

There was no SAGE meeting between 18th and 23rd, which was unfortunate in the circumstances (21-22 being a weekend). On the 23rd, SAGE finally realised that they had got the R number wrong and that as a result the doubling time was much shorter than had been previously believed, making the situation quite desperate. Specifically, the SAGE meeting of the 23rd concluded: “Case numbers could exceed NHS capacity within the next 10 days on the current trajectory” and this statement must be understood in the context of the immediately preceding 20th March SPI-M meeting which noted both: “Any measures enacted would take 2-3 weeks to have an impact on ICU admissions” and also: “If the higher reproduction number is representative of the longer term, then it is likely that additional measures will be required to bring it below one”.

Thus SAGE's underestimate of the R number didn't just mean that the epidemic was coming faster and harder than previously thought: another consequence is that actions that would have been adequate for R=2.4, might not be adequate for R=3. It is quite understandable that this caused alarm within SAGE, but it only happened on the 23rd.
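The point about adequacy can be illustrated with a back-of-envelope calculation. This is only a sketch under the simplest possible assumption, namely that a package of measures scales R by a constant factor. The 60% reduction in transmission is a hypothetical figure chosen by me to show the effect, not an estimate from SAGE or SPI-M.

```python
def required_reduction(r):
    """Fractional cut in transmission needed to bring R down to exactly 1,
    assuming measures simply scale R by a constant factor."""
    return 1 - 1 / r


def r_after(r, reduction):
    """Reproduction number after cutting transmission by `reduction` (0 to 1)."""
    return r * (1 - reduction)


# Hypothetical package of measures that cuts transmission by 60%.
assumed_reduction = 0.6

for r0 in (2.4, 3.0):
    print(
        f"R={r0}: needs a cut of more than {required_reduction(r0):.0%}; "
        f"a {assumed_reduction:.0%} cut leaves R at {r_after(r0, assumed_reduction):.2f}"
    )
```

Under that assumption, the same measures that would just push R below one starting from 2.4 leave it above one starting from 3, which is the sense in which actions adequate for the lower estimate might not be adequate for the higher one.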

The Govt imposed a legally-enforceable lockdown with much more far-reaching restrictions immediately that evening (23rd March).

Moving on to the next article, in the Guardian, a hagiography of Patrick Vallance:

“But it now seems clear that Boris Johnson, and his advisers, were slow to heed Vallance’s early advice.

Before the 16 March press conference, Vallance chaired a meeting of the Scientific Advisory Group for Emergencies (Sage) in which a collection of experts had advised that the first lockdown should begin immediately.

Johnson did not announce the unprecedented national lockdown until a week later on 23 March in a primetime TV address to the nation.”

This is simply not true, as documented above. SAGE asked for relatively modest action around the 16-18th, and the Govt responded promptly. SAGE explicitly assessed on the 18th that the actions were probably adequate, and it was only on the 23rd, when they realised that they had got the doubling time wrong, that they saw they had a much larger and more urgent problem on their hands. Vallance also got this wrong in his appearance before the House of Commons Select Committee on Science and Technology.

Most recently, a podcast on the Guardian consisting of an interview of Neil Ferguson. He points very firmly to the data about higher case numbers due to greater importation being what drove the accelerated decision making in mid-March (NB this view is very different from Vallance who very emphatically linked the change in policy advice to the revision of the estimated doubling time - it is simply not possible for Ferguson and Vallance both to be correct about this). Ferguson mentions this being discussed in the “first weekend in March” which I'm sure must be a simple slip as this would be 7-8th March whereas on the 10th and even 13th SAGE seems pretty sanguine about the situation and does not suggest any need to take immediate action. Assuming he meant the 14-15th March instead, this is far more consistent with SAGE as the minutes of the 16th do certainly suggest some action should be taken in the light of the new data:

“The science suggests additional social distancing measures should be introduced as soon as possible.”

When asked specifically (at 11m20 in the podcast) “were scientists telling ministers to go earlier?” Ferguson firstly points again to the surveillance data as escalating the decision making process, and then coyly says it was entirely in the Govt's hands as to what actions they took. He could have said, but chose not to, that the Govt followed SAGE's advice promptly and to the letter. And the interviewer didn't pursue the point. While the improved surveillance data undoubtedly played a role in the process, the urgent advice for the most stringent controls only came on the 23rd as a result of the revised estimate of doubling time. You only have to glance at the SAGE minutes to see that they were not shy about offering policy advice throughout the outbreak.

At 16m40 onwards the interviewer says, with reference to the situation in September after schools reopened:

“...once again the advice from scientists was to lock down. But that advice was not heeded. Did that delay once again lead to a higher death rate than we might have seen?”

Without getting into the September story here, any delayed response from the Govt (which I don't dispute was evident in the autumn) could only “once again” have resulted in a higher death rate if there had also been a delayed response to advice to lock down in March. Which there was not, according to the evidence I have outlined.