I was listening to a recent episode of More or Less which had a piece about statistical significance. The guest was Stephen Ziliak, who has a book on the topic. I actually thought he gave a slightly confusing account of the limitations of significance testing ("likelihood of the magnitude"?). His book also has a lot of hostile reviews on Amazon, suggesting it reads a bit like a blog rant. Perhaps this Gerd Gigerenzer article is better written.

The reason for the More or Less article was a recent US Supreme Court decision that medical trial results could not be brushed under the carpet simply due to their being "statistically insignificant". In the case in question, it seems that there might have been prior reasons to suspect side effects of the type observed, so the fact that they had not (at that time) reached an arbitrary threshold is not adequate justification for concealing them.

As I've mentioned before, IMO most of the confusion over significance testing arises because the p-value doesn't actually answer the question people are interested in (the probability of a hypothesis being true), but is routinely misinterpreted in that way. The same confusion extends to confidence intervals, of course, and these errors are routinely found even in articles that claim to be authoritative (eg and of course also here). But I wouldn't call it a cult; it's more likely to be down to confused thinking and laziness on the whole.
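To make the gap between a p-value and the probability of a hypothesis concrete, here is a rough sketch using Bayes' theorem. The numbers are purely illustrative assumptions of mine, not taken from any real study: suppose 90% of the hypotheses being tested are true nulls, tests have 80% power against real effects, and the threshold is the usual 0.05. The function name is also just made up for this example.

```python
def prob_null_given_significant(prior_null, power, alpha):
    """P(null is true | result is significant), by Bayes' theorem.

    prior_null: assumed fraction of tested hypotheses where the null is true
    power:      assumed P(significant | null is false)
    alpha:      significance threshold, i.e. P(significant | null is true)
    """
    false_positives = alpha * prior_null          # true nulls that pass the threshold
    true_positives = power * (1.0 - prior_null)   # real effects that are detected
    return false_positives / (false_positives + true_positives)


# Illustrative (assumed) inputs: 90% true nulls, 80% power, alpha = 0.05
result = prob_null_given_significant(prior_null=0.9, power=0.8, alpha=0.05)
print(f"{result:.2f}")  # prints 0.36
```

So under these assumed inputs, about 36% of "significant at the 5% level" results would come from true nulls, which is a long way from the 5% that the common misreading suggests. Change the assumed prior and the answer moves a lot, which is rather the point: the p-value alone cannot give you this number.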

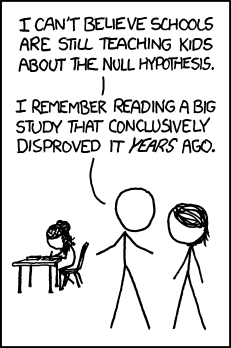

And also, as several people spotted, on xkcd:

7 comments:

Dear James,

Confusing is my middle name so no problem there.

But I am eager to see your proof that distinguishing a likelihood (Fisher style) from a likelihood of a magnitude of some human or scientific importance is confusing and not relevant to science.

Sincerely,

Steve Ziliak

See this:

http://xkcd.com/892/

I don't agree with everything that Ziliak and McCloskey say in The Cult. But judging their book on the basis of Amazon reviews is rather like judging your girlfriend on the basis of bathroom graffiti. Why not open the cover and judge for yourself?

Steve, I don't disagree with you on the fundamentals. I just thought (and said) that the interview was unclear. Your summing up the point with "there's a big difference between magnitude, and likelihood of magnitude" is hard enough to parse even (probably) knowing what you meant! And that was by no means the only place where the wording seemed unhelpfully obscure to me.

Anon, I don't judge the book, but merely pointed to the reviews. I suppose to be fair I could have also mentioned that there were good reviews too, but I did give a link and the level of hostility for a popular stats book is fairly notable - compare this by Gigerenzer for example. I already know (and broadly agree with) the main points so it's not high on my to-read list.

Thanks Rattie - you're not the only one to have spotted that!

Put it this way, how confident do you have to be about a proposition to bet on it?

Depends how much we're betting.

As Groucho put it "You bet your life"
